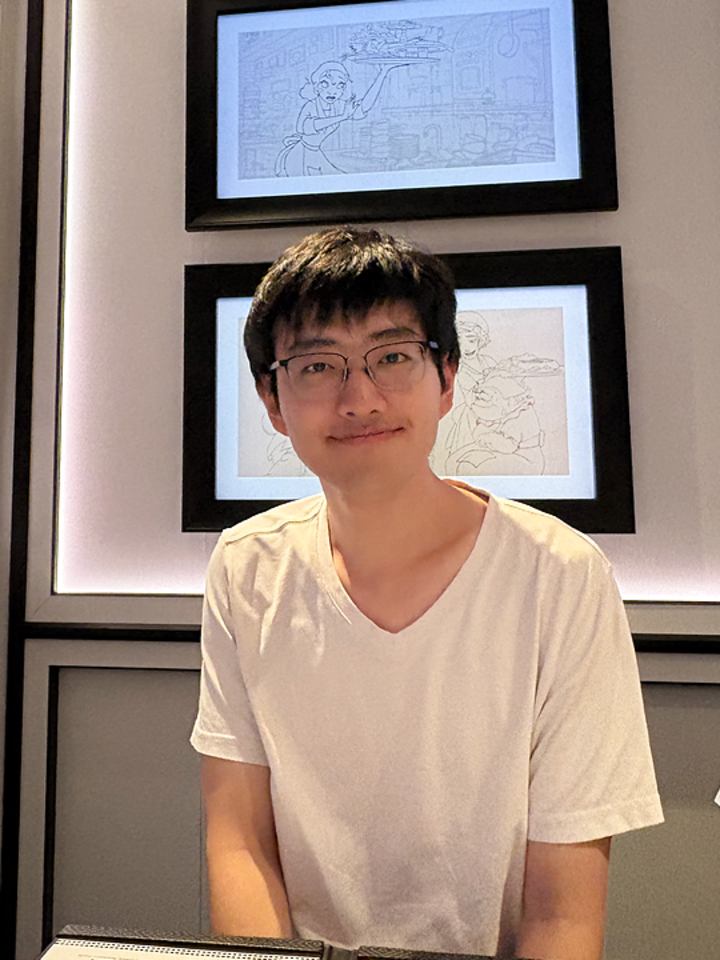

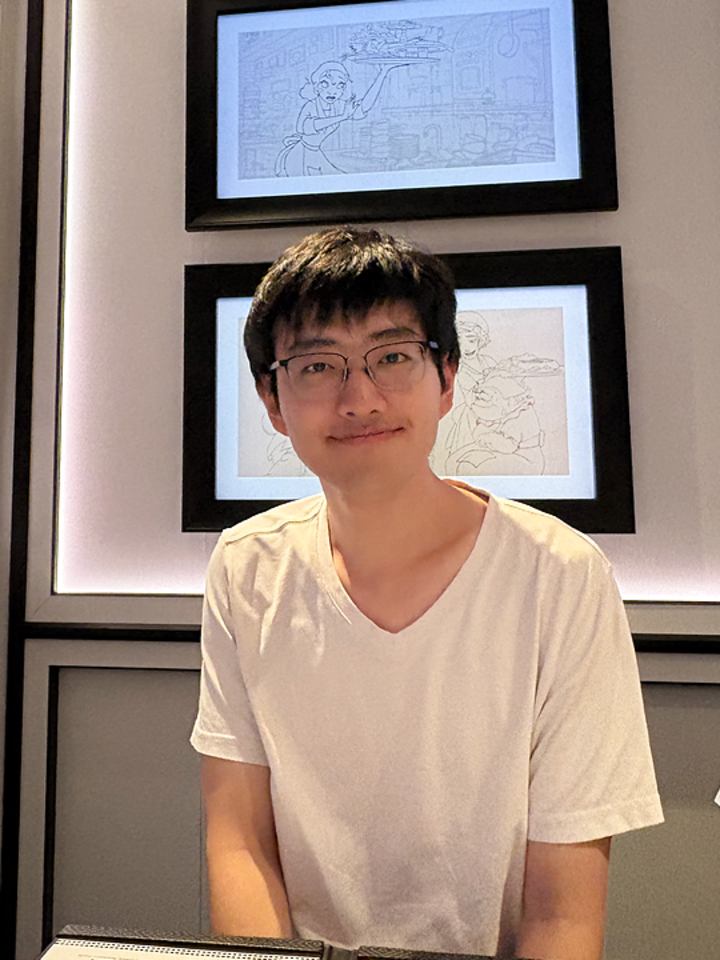

Hu Tianrun

Incoming PhD Student (Fall 2026)

School of Computing @ NUS

Research Engineer @ Smart System Institute (SSI)

"wait, this actually works?"

Email: tianrunhu@gmail.com, tianrun.hu@u.nus.edu

Office: Smart System Institute, Innovation 4.0, #06-01C, 3 Research Link, Singapore 117602

I am an incoming PhD student (Fall 2026) at the School of Computing, National University of Singapore, where I have been awarded the A*STAR Graduate Scholarship (Computing). I am currently a Research Engineer at the Smart System Institute (SSI), supervised by Prof. David Hsu. At NUS, I also work closely with Dr. Hanbo Zhang. My research focuses on mobile manipulation in the real world, particularly the coupling between perception and action. Previously, I was an undergraduate in Computer Engineering at the College of Computing and Data Science (CCDS), Nanyang Technological University, under the guidance of Prof. Lam Siew Kei. During my undergraduate studies, I worked with Dongshuo Zhang.

My long-term research goal is to build mobile manipulators that act robustly in the unstructured real world. In the real world, perception and action shape each other. What the robot sees decides how it acts, and how it acts decides what it gets to see. Treating them in isolation breaks down the moment the environment stops cooperating. I am interested in jointly reasoning about perception and action, building principled frameworks that scale this kind of reasoning to the complexity of real environments.

Whether you're a fellow researcher looking to collaborate, an undergrad figuring out research or PhD applications, or someone just starting out and not sure where to begin, feel free to shoot me an email. I read and reply to every one, and I'm always happy to chat!